How to Scrape SEC Filings: A Comprehensive Guide

Learn how to scrape SEC filings using Python web scraping and the SEC’s EDGAR APIs to access public financial data.

If you’re an investor, financial analyst, researcher, or simply someone interested in the financial health of publicly traded companies in the United States, then scraping SEC filings can be a game-changer.

The U.S. Securities and Exchange Commission (SEC) houses a treasure trove of financial and regulatory data through its Electronic Data Gathering, Analysis, and Retrieval (EDGAR) system. In this comprehensive guide, we’ll walk you through the process of scraping SEC filings effectively. Whether you’re a seasoned data scientist or just starting out, we’ve got you covered.

What are SEC Filings?

Before diving into the nitty-gritty of scraping SEC filings, it’s essential to understand what they are. SEC filings are mandatory documents submitted by publicly traded companies to the SEC.

These filings provide critical financial and business information, ensuring transparency in the financial markets.

They include quarterly and annual reports (Form 10-Q and Form 10-K), current reports such as earnings releases (Form 8-K), insider transaction reports (Form 4), and more. Together, these filings offer insights into a company’s financial health, risk factors, and corporate governance.

Why Do You Need to Scrape SEC Filings?

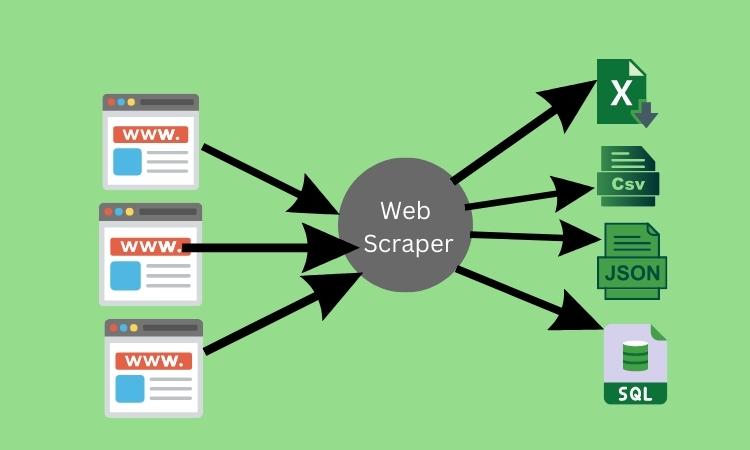

Why should you bother scraping SEC filings when you can access them on the SEC’s website? Well, automation and customization are key. Scraping allows you to automate the data collection process, ensuring you always have the latest information at your fingertips.

Moreover, you can tailor your scraping to focus on specific companies, forms, or date ranges, saving time and effort. Whether you’re a stock trader who wants new filings as soon as they are published or an academic researcher analyzing historical data, scraping makes the collection repeatable and convenient.

Choose the Right Tools and Technologies

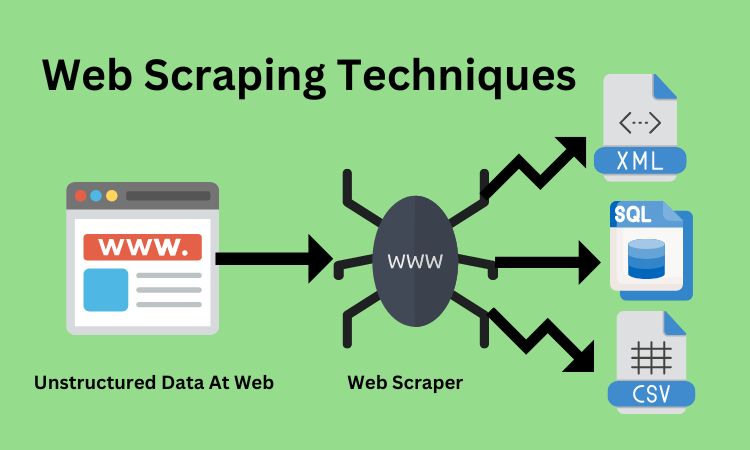

Scraping SEC filings requires the right tools and technologies. Here are some options to consider:

A-Python: Python is a popular language for web scraping, thanks to libraries like BeautifulSoup and Scrapy.

B-APIs: The SEC provides official EDGAR APIs at data.sec.gov for programmatic access, returning filing histories and XBRL financial data as structured JSON.

C-Third-Party Services: Several third-party providers offer SEC filing data through APIs or bulk downloads for a fee.

D-Commercial Scraping Tools: Some commercial scraping tools offer pre-built solutions for SEC filings.

We’ll delve deeper into Python and the EDGAR API in the following sections.

How To Scrape SEC Filings Using Python?

Python is a powerful and versatile language for web scraping, and it’s a favorite among data enthusiasts. Here’s a step-by-step guide to scraping SEC filings with Python:

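A-Installing Necessary Libraries: Start by installing the BeautifulSoup and requests libraries (the packages are named beautifulsoup4 and requests on PyPI).

B-Accessing the SEC EDGAR Website: Use the requests library to access the SEC EDGAR website and navigate to the desired filing. Send a User-Agent header that identifies you, since the SEC asks automated clients to declare who they are.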

C-Parsing HTML with BeautifulSoup: Parse the HTML content of the filing page to extract the information you need.

D-Data Cleaning and Storage: Clean and organize the extracted data and save it to your preferred format (e.g., CSV, JSON, or a database).
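Steps A through D can be sketched roughly as follows. This is a minimal sketch rather than a definitive implementation: the function names (fetch_html, extract_table_rows, rows_to_csv) and the User-Agent value are placeholders invented for illustration, and it assumes the data you want sits in HTML tables, which is common for financial statements but will not hold for every filing.

```python
import csv
import io

import requests
from bs4 import BeautifulSoup

# Assumption: placeholder identity. Replace it with your own name and email,
# since the SEC asks automated clients to identify themselves.
HEADERS = {"User-Agent": "Sample Research admin@example.com"}


def fetch_html(url: str) -> str:
    """Step B: download a filing page and return its HTML text."""
    response = requests.get(url, headers=HEADERS, timeout=30)
    response.raise_for_status()  # fail loudly on 403/404 instead of parsing an error page
    return response.text


def extract_table_rows(html: str) -> list[list[str]]:
    """Step C: return every non-empty table row as a list of cell strings."""
    soup = BeautifulSoup(html, "html.parser")
    rows = []
    for tr in soup.find_all("tr"):
        cells = [cell.get_text(" ", strip=True) for cell in tr.find_all(["td", "th"])]
        # Step D (cleaning): drop cells that are empty after stripping,
        # because filings often pad tables with spacer cells.
        cells = [text for text in cells if text]
        if cells:
            rows.append(cells)
    return rows


def rows_to_csv(rows: list[list[str]]) -> str:
    """Step D (storage): serialize the cleaned rows as CSV text."""
    buffer = io.StringIO()
    csv.writer(buffer).writerows(rows)
    return buffer.getvalue()
```

To use it, pass the URL of a filing document to fetch_html, feed the result to extract_table_rows, and write the output of rows_to_csv to a file. Keeping the download separate from the parsing makes the parser easy to check against saved HTML before you point it at the live site.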

Scraping SEC Filings Using the EDGAR API

The SEC offers an official EDGAR API that provides programmatic access to SEC filings. It’s a structured and reliable way to retrieve data. Here’s a brief overview:

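- Access: No API key is required. The APIs hosted at data.sec.gov are open to the public and do not use authentication.

- Endpoints: The APIs offer JSON endpoints for each company’s filing history (submissions) and for XBRL financial data (company facts, company concepts, and frames).

- Identification: Instead of a key, send a User-Agent header that states who you are and how to contact you, as the SEC’s fair access guidance asks.

- Data Retrieval: Request data by the company’s Central Index Key (CIK), then filter the results by form type or filing date in your own code.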
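As a rough illustration of the points above, the sketch below builds the URL for a company’s filing history and filters the result by form type and date. It uses only the standard library. The helper names (submissions_url, fetch_submissions, filter_recent) and the User-Agent value are hypothetical, and the code assumes the submissions JSON keeps recent filings as parallel lists under filings.recent, which is how the endpoint is laid out at the time of writing.

```python
import json
import urllib.request

# Assumption: placeholder identity; use your own name and contact email.
USER_AGENT = "Sample Research admin@example.com"


def submissions_url(cik: int) -> str:
    """Build the submissions endpoint URL; the CIK is zero-padded to 10 digits."""
    return f"https://data.sec.gov/submissions/CIK{cik:010d}.json"


def fetch_submissions(cik: int) -> dict:
    """Download and decode one company's filing history."""
    request = urllib.request.Request(
        submissions_url(cik), headers={"User-Agent": USER_AGENT}
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response)


def filter_recent(submissions: dict, form_type: str, since: str = "") -> list[dict]:
    """Keep recent filings of one form type filed on or after `since` (YYYY-MM-DD)."""
    recent = submissions["filings"]["recent"]
    matches = []
    for form, filed, accession in zip(
        recent["form"], recent["filingDate"], recent["accessionNumber"]
    ):
        # ISO dates compare correctly as plain strings.
        if form == form_type and filed >= since:
            matches.append(
                {"form": form, "filingDate": filed, "accessionNumber": accession}
            )
    return matches
```

For example, filter_recent(fetch_submissions(320193), "10-K", "2020-01-01") would list Apple’s annual reports filed since 2020, with 320193 being Apple’s CIK. The filtering happens locally because the endpoint returns the whole filing history for a company rather than accepting search criteria.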

Best Practices and Tips for Scraping SEC Filings

Before you dive into scraping SEC filings, it’s crucial to follow best practices and adhere to legal and ethical guidelines. Some tips include:

1-Respect Robots.txt: Check the SEC’s robots.txt file to ensure you’re not violating any access restrictions.

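2-Rate Limiting: Implement rate limiting to avoid overloading the SEC’s servers. The SEC’s fair access guidance currently caps automated traffic at 10 requests per second, and clients that exceed it may be temporarily blocked.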

3-Data Storage: Plan your data storage strategy to efficiently manage the scraped data.

4-Keep Updated: Regularly update your scraping scripts to accommodate any changes on the SEC’s website.
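Tips 1 and 2 might look like this in practice. This is a minimal sketch using only the standard library: Throttle and is_allowed are hypothetical helper names, and the rate you pass in should come from the SEC’s current fair access guidance (10 requests per second at the time of writing, so check before relying on it).

```python
import time
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Tip 1: check a URL against the text of a robots.txt file."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


class Throttle:
    """Tip 2: space calls at least 1 / max_per_second seconds apart."""

    def __init__(self, max_per_second: float, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = 1.0 / max_per_second
        self._clock = clock  # injectable so the class can be tested without waiting
        self._sleep = sleep
        self._last = None  # time at which the previous request was released

    def wait(self) -> float:
        """Block until the next request is allowed; return the seconds slept."""
        now = self._clock()
        delay = 0.0
        if self._last is not None:
            delay = max(0.0, self.min_interval - (now - self._last))
            if delay > 0:
                self._sleep(delay)
        self._last = now + delay
        return delay
```

Create one Throttle for the whole script, for example Throttle(max_per_second=5) to stay well under the limit, and call its wait() method immediately before every request. Sharing a single instance matters, because the limit applies to all of your traffic and not to each function separately.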

FAQs

Can you scrape SEC filings?

Yes, you can scrape SEC filings using web scraping techniques or by accessing the SEC EDGAR API, which provides structured data retrieval.

Is there an API to parse SEC filings on EDGAR?

Yes, the SEC offers an official EDGAR API that allows programmatic access to SEC filings, making it easier to retrieve and parse the data.

Is there an API for SEC filings?

Yes, the SEC EDGAR API provides a convenient way to access and retrieve SEC filings, making it a valuable resource for investors, analysts, and researchers.

Are SEC filings public records?

Yes, SEC filings are public records. Publicly traded companies are required to submit these filings to the U.S. Securities and Exchange Commission (SEC) to ensure transparency in financial markets.

Conclusion

Scraping SEC filings can be a powerful tool for gaining insights into the financial world. Whether you’re an investor looking to make informed decisions or a researcher aiming to uncover hidden trends, access to this data is invaluable. With the right tools and techniques, you can streamline the process and stay ahead of the curve in the world of finance.

In this guide, we’ve covered the basics, highlighted the importance, and provided practical steps to scrape SEC filings. Now, armed with this knowledge, you can embark on your data journey with confidence. Happy scraping!