10 Web Scraping Challenges You Should Know!

In this article, we’ll discuss some of the challenges you might face when web scraping, along with tips for overcoming them.

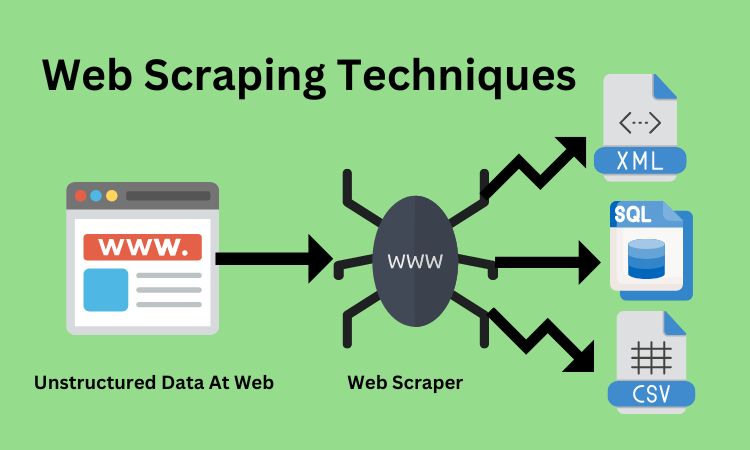

Web scraping can be a great way to gather data for your business or personal use. It can also be difficult, especially because most website data is unstructured, and like any process, it comes with its challenges.

If you’re interested in web scraping, read on to learn more about these challenges and how to overcome them.

Let’s Check Out the Top 10 Web Scraping Challenges

1- Granting Access To Bots

Bots are typically used to scrape data from websites that don’t have an API or from websites that have an API that is difficult to use. Text is the most common type of data scraped from websites, but bots can also be used to scrape images, videos, and files.

The biggest challenge with web scraping is bot access. Many websites don’t want to be scraped, so they block bots from accessing their content. They can do this with several methods, including IP blocking, CAPTCHAs, and rate limits.
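As a rough illustration of how a scraper might react to these blocks, the sketch below retries when a site answers with HTTP 429 (rate limited) and stops at once on HTTP 403 (blocked). The `fetch` callable, its `(status, headers, body)` return shape, and the function name `fetch_with_backoff` are assumptions made for this example, not part of any particular library.

```python
import time
from typing import Callable, Mapping, Tuple

Response = Tuple[int, Mapping[str, str], str]  # (status, headers, body)


def fetch_with_backoff(
    fetch: Callable[[str], Response],   # hypothetical HTTP helper supplied by the caller
    url: str,
    max_attempts: int = 4,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Response:
    """Retry on HTTP 429, give up immediately on HTTP 403."""
    for attempt in range(max_attempts):
        status, headers, body = fetch(url)
        if status == 403:
            raise PermissionError(f"access to {url} is blocked")
        if status != 429:
            return status, headers, body
        # Prefer the server's Retry-After hint (whole seconds) when it is numeric,
        # otherwise fall back to exponential backoff: 1s, 2s, 4s, ...
        retry_after = headers.get("Retry-After", "")
        delay = float(retry_after) if retry_after.isdigit() else base_delay * 2 ** attempt
        sleep(delay)
    raise TimeoutError(f"still rate limited after {max_attempts} attempts")
```

Passing `sleep` as a parameter keeps the function easy to test, because a test can record the delays without waiting for them.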

2- Handling CAPTCHA Or CAPTCHA Blocking

CAPTCHA stands for “Completely Automated Public Turing test to tell Computers and Humans Apart.” It is a program designed to distinguish between humans and automated bots.

It is usually implemented as a challenge-response test in which the user is shown a distorted image of text and must type that text to gain access to the content. Here are a few ways to handle a CAPTCHA when you are web scraping.

- Use a human to solve the CAPTCHA manually. This can be time-consuming, but it may be the only option if you are trying to scrape data from a site that has a very strict CAPTCHA system.

- Use an automated CAPTCHA-solving service. This can be costly, but it may be worth it if you are trying to scrape a large amount of data.
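Either option needs the scraper to notice that it has received a CAPTCHA page instead of the content it asked for. The sketch below shows one simple way to do that and hand the page to a solver. The substring check is a crude heuristic that can misfire on pages that merely mention the word, and `fetch` and `solve` are hypothetical hooks (a person or a paid service would sit behind `solve`).

```python
from typing import Callable


def looks_like_captcha(html: str) -> bool:
    # Heuristic assumption: challenge pages usually contain the word "captcha",
    # for example in widget class names such as "g-recaptcha" or "h-captcha".
    return "captcha" in html.lower()


def get_page(
    url: str,
    fetch: Callable[[str], str],        # hypothetical: downloads the page HTML
    solve: Callable[[str, str], str],   # hypothetical: returns the HTML behind the CAPTCHA
    max_solves: int = 2,
) -> str:
    """Return the page HTML, passing CAPTCHA pages to `solve` when needed."""
    html = fetch(url)
    for _ in range(max_solves):
        if not looks_like_captcha(html):
            return html
        html = solve(url, html)
    if looks_like_captcha(html):
        raise RuntimeError("CAPTCHA still present after solving attempts")
    return html
```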

3- Structural Changes In Websites

Structural changes in websites are also a web scraping challenge. The data fields in a website’s HTML code may move, be renamed, or be removed entirely. Even if a web scraper can find the data fields, the data within those fields may differ from what was expected. A web scraper must be able to adapt to these changes to continue extracting accurate data.

Sometimes, a website structure change may break a web scraper entirely. When this happens, the web scraper must be fixed to continue working. This can be challenging, especially if the web scraper is large and complex.
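One common way to soften this problem is to try several selectors in order and validate whatever comes back. The following is a minimal sketch of that idea using only the standard library. The selector list, the price field, and the helper names are assumptions for illustration. The tiny parser only reads text up to the first closing tag and only matches exact attribute values, so a real project would likely use a full HTML library instead.

```python
from html.parser import HTMLParser
from typing import Optional, Sequence, Tuple


class TextByAttr(HTMLParser):
    """Collect text of the first element whose attribute equals a given value."""

    def __init__(self, attr: str, value: str):
        super().__init__()
        self.attr, self.value = attr, value
        self.capturing = False
        self.found = False
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if not self.found and dict(attrs).get(self.attr) == self.value:
            self.capturing = self.found = True

    def handle_endtag(self, tag):
        self.capturing = False  # stop at the first closing tag (no nesting support)

    def handle_data(self, data):
        if self.capturing:
            self.parts.append(data)


# Hypothetical selectors: the current layout first, then older or alternative ones.
PRICE_SELECTORS = [("class", "price"), ("id", "product-price"), ("itemprop", "price")]


def extract_first(html: str, selectors: Sequence[Tuple[str, str]]) -> Optional[str]:
    for attr, value in selectors:
        parser = TextByAttr(attr, value)
        parser.feed(html)
        parser.close()
        text = "".join(parser.parts).strip()
        if text:
            return text
    return None


def parse_price(html: str) -> float:
    text = extract_first(html, PRICE_SELECTORS)
    if text is None:
        raise LookupError("no selector matched; the page layout may have changed")
    # float() raises ValueError if the field no longer holds a number.
    return float(text.replace("$", "").replace(",", ""))
```

Raising an error when nothing matches, rather than returning an empty value, makes a broken scraper fail loudly instead of quietly storing bad data.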

4- IP Blocking

IP blocking is a common method used by websites to prevent unwanted traffic or bots from accessing their content. When a website detects an IP address it wants to block, it adds that IP to a blacklist. The blacklist is then used to stop any traffic from that IP from reaching the website.

While IP blocking can effectively prevent unwanted traffic, it can also be a major challenge for web scraping. A scraper that sends many requests from a single IP address is easy to spot, which is why web scrapers often rely on rotating IP addresses to avoid being blocked. If a web scraper uses a blacklisted IP address, it will be unable to access the website.
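To make the idea of rotating IP addresses concrete, here is a minimal sketch of a proxy pool that cycles through its addresses and skips any that have been blocked. The class name, the `fetch(url, proxy)` callable, and the choice to treat HTTP 403 and 429 as "blocked" are all assumptions made for this example.

```python
from itertools import cycle
from typing import Callable, Iterable, Tuple


class ProxyRotator:
    """Hand out proxies in round-robin order, skipping blocked ones."""

    def __init__(self, proxies: Iterable[str]):
        self.proxies = list(proxies)
        self.blocked = set()
        self._cycle = cycle(self.proxies)

    def next_proxy(self) -> str:
        # One full pass over the pool is enough to find a usable proxy.
        for _ in range(len(self.proxies)):
            proxy = next(self._cycle)
            if proxy not in self.blocked:
                return proxy
        raise RuntimeError("every proxy in the pool is blocked")

    def mark_blocked(self, proxy: str) -> None:
        self.blocked.add(proxy)


def fetch_via_pool(
    rotator: ProxyRotator,
    fetch: Callable[[str, str], Tuple[int, str]],  # hypothetical: (url, proxy) -> (status, body)
    url: str,
    max_attempts: int = 5,
) -> str:
    for _ in range(max_attempts):
        proxy = rotator.next_proxy()
        status, body = fetch(url, proxy)
        if status in (403, 429):  # assumed signals that this IP is blocked
            rotator.mark_blocked(proxy)
            continue
        return body
    raise RuntimeError(f"could not fetch {url} in {max_attempts} attempts")
```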

5- Real-time Latency

Real-time latency is a challenge you must be aware of when scraping data. Scraping can be slow, particularly when the target website changes constantly or relies heavily on JavaScript, and that delay makes it difficult to collect data in near-real-time.

To overcome these challenges, web scraping software must adapt to changes in the target website and handle a high volume of traffic without running into CPU or memory issues. Some web scraping software also includes features specifically designed to address real-time latency, such as data caching and rate limiting.
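Data caching, for instance, can be sketched as a small time-to-live (TTL) cache: a page fetched recently is served from memory, and only stale entries trigger a new request. The class name, the `fetch` callable, and the TTL value are illustrative assumptions rather than features of any specific tool.

```python
import time
from typing import Callable, Dict, Tuple


class TTLCache:
    """Cache page bodies for a fixed number of seconds."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self.entries: Dict[str, Tuple[float, str]] = {}  # url -> (fetched_at, body)

    def get_or_fetch(self, url: str, fetch: Callable[[str], str]) -> str:
        now = self.clock()
        hit = self.entries.get(url)
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1]          # fresh enough: no network request
        body = fetch(url)          # missing or stale: fetch and remember
        self.entries[url] = (now, body)
        return body
```

A shorter TTL keeps the data fresher at the cost of more requests, so the right value depends on how quickly the target site changes.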

6- Dynamic Websites

One of the biggest challenges with scraping dynamic websites is that the data you are trying to extract is constantly changing. The site’s content is not static; rather, it is generated on demand when a user requests it. This can make it difficult to get an accurate and up-to-date picture of the data you are trying to collect.

7- User-Generated Content

As more of the internet’s content comes from its users, web data is also becoming more difficult to scrape. User-generated content (UGC) is created by the users of a website rather than by the website’s owner. This can include things like comments, reviews, and forum posts.

While UGC can be valuable data for businesses, it’s much harder to scrape than traditional web content. This is because UGC is often spread across multiple websites, making it more time-consuming and difficult to gather. In addition, UGC is often unstructured, making it more difficult to parse and extract the data you need.

8- Browser Fingerprinting

When it comes to web scraping, one of the challenges you may encounter is browser fingerprinting. Websites use it to test whether a human user or a robot is accessing the site through a web browser.

The tests search for details about your operating system, installed browser add-ons, available fonts, timezone, and browser type and version, among other things. Combined, all this information creates a browser’s “fingerprint.”

One of the consequences of browser fingerprinting is that it can make it harder to scrape data from websites. This is because the website can detect that you are using a web scraper and block your access.
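To see how separate details combine into one identifier, consider the simplified sketch below, written from the website's point of view. It serializes a set of browser attributes in a stable order and hashes the result. The attribute names and the use of SHA-256 are assumptions for illustration; real fingerprinting systems collect many more signals and are not described in this article.

```python
import hashlib
import json
from typing import Mapping


def fingerprint(attributes: Mapping[str, object]) -> str:
    """Combine browser attributes into a single stable identifier."""
    # Sorting the keys makes the result independent of collection order.
    canonical = json.dumps(attributes, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical attribute set, loosely following the details listed above.
visitor = {
    "os": "Windows 11",
    "browser": "Firefox 126",
    "timezone": "Europe/Berlin",
    "fonts": ["Arial", "Calibri", "Verdana"],
    "addons": [],
}
visitor_id = fingerprint(visitor)
```

Because a change in any single attribute produces a different identifier, a scraper that reports an unusual or inconsistent combination of values is easy to single out.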

9- HTTP Request Analysis

When web scraping, you make many HTTP requests to a website to extract data. The problem is that websites are getting smarter and are starting to catch on to the fact that many of these requests come from bots rather than real people.

This matters because websites can put up barriers that make it more difficult for bots to access their data. One of these barriers is HTTP request analysis.

One of the main challenges is that a lot of data accompanies every HTTP request: the HTTP headers, the client IP address, the SSL/TLS version, and the list of supported TLS ciphers.

Another challenge is that the structure of the HTTP request can reveal whether it is from a real web browser or a script. For example, the order of the HTTP headers can give away whether a request is from a real browser or a bot.
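The sketch below shows, in a simplified form, what such an analysis might look like on the website's side. It checks for headers that browsers normally send, looks for the default User-Agent strings of common HTTP libraries, and compares header order against a reference. `REFERENCE_ORDER` is a made-up order used only for this example (each real browser has its own), and the function name is likewise hypothetical.

```python
from typing import List, Sequence, Tuple

# Hypothetical reference order; real browsers differ from one another.
REFERENCE_ORDER = ("user-agent", "accept", "accept-encoding", "accept-language")
# Substrings found in the default User-Agent of some common tools.
SCRIPT_AGENTS = ("python-requests", "python-urllib", "curl", "scrapy")


def suspicion_reasons(headers: Sequence[Tuple[str, str]]) -> List[str]:
    """Return the reasons a request looks automated (empty list if none)."""
    names = [name.lower() for name, _ in headers]
    reasons = [f"missing {h}" for h in REFERENCE_ORDER if h not in names]

    agent = next((v for n, v in headers if n.lower() == "user-agent"), "").lower()
    if any(tool in agent for tool in SCRIPT_AGENTS):
        reasons.append("library user-agent")

    # Compare the relative order of the headers that are present.
    seen = [n for n in names if n in REFERENCE_ORDER]
    expected = [h for h in REFERENCE_ORDER if h in names]
    if seen != expected:
        reasons.append("unexpected header order")
    return reasons
```

For a scraper, the practical takeaway is that sending a realistic User-Agent alone is not enough if the rest of the request still looks like a script.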

10- Honeypots

It is common for website owners to put honeypots on their pages to catch web scrapers. Honeypots are usually links that are invisible to human visitors but visible to web scrapers. Once a web scraper follows a honeypot link, the website can use the information it receives (such as the scraper’s IP address) to block that scraper.

Using a web scraping tool like Octoparse can greatly reduce the chance of falling into a honeypot trap. Octoparse uses XPath to precisely locate items to click or scrape on a website page, making it less likely to trigger a honeypot trap.
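If you write your own scraper, a basic precaution is to skip links that a human could not see. The sketch below collects links from a page and sets aside those hidden with the `hidden` attribute or an inline `display: none` or `visibility: hidden` style. It is only a partial check made under simplifying assumptions: links hidden through external CSS classes or hidden parent elements are not detected, and the class name is hypothetical.

```python
from html.parser import HTMLParser

HIDDEN_STYLES = ("display:none", "visibility:hidden")


class VisibleLinkCollector(HTMLParser):
    """Separate links a visitor can see from links hidden inline."""

    def __init__(self):
        super().__init__()
        self.visible = []
        self.skipped = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr = dict(attrs)
        href = attr.get("href")
        if not href:
            return
        # Normalize "display: none" to "display:none" before matching.
        style = (attr.get("style") or "").replace(" ", "").lower()
        hidden = "hidden" in attr or any(rule in style for rule in HIDDEN_STYLES)
        (self.skipped if hidden else self.visible).append(href)


def visible_links(html: str) -> list:
    collector = VisibleLinkCollector()
    collector.feed(html)
    collector.close()
    return collector.visible
```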

Discussion Wrap-Up!

In conclusion, web scraping can be a legal and helpful way to collect data, but many challenges are involved. Businesses should be careful to avoid overloading websites when scraping data, and they should choose experienced parsers to help collect the right data.

With the right web scraping software, it is possible to overcome these challenges and extract the data you need in near-real-time.